Nearly 70 years ago millions of American parents made an audacious decision that would have global consequences. These parents agreed to enroll their first, second, and third graders in a human experiment of unprecedented scale and complexity—the first field trial of Jonas Salk’s polio vaccine.

Preliminary tests suggested the vaccine was effective and safe. Nevertheless, the trial was a leap into the unknown. Would the shots really work? What adverse effects might lurk in the shadows? And why would any sensible parent let their child be the one who found out?

Retired physician and historian of medicine Bruce Fye has a simple explanation: fear. Both he and his parents were “petrified of getting polio.” Fye, then a third grader in Mansfield, Ohio, was a Polio Pioneer, one of the 1.6 million children who in 1954 participated in a two-month testing blitz that spanned 44 states.

Polio was a disease of summer, its attack as sudden as thunderstorms and as ominous. Yet the disease was relatively uncommon: cases averaged 20,000 per year in the 1940s and early 1950s, peaking at 58,000 in the epidemic year of 1952. The death rate in 1952 was around 5%; estimates differ on the chance of paralysis, but it may have been as high as 25% of cases.

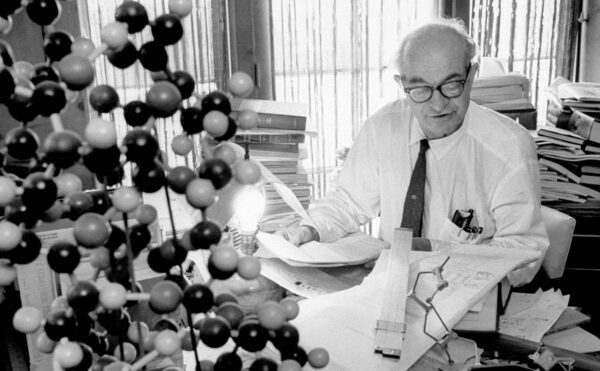

Nurse Grace Kyler working with polio victims at the FAMU Hospital in Tallahassee, ca 1953.

Nonetheless, everyone knew someone who had polio. Fye remembers a sixth grader at his school; I remember my kindergarten teacher in Connecticut, who wore a metal brace on one leg. The threat of a lifetime of physical disability for their children loomed over American parents, who had clamored for a vaccine for decades.

But within a few years the threat, if not the terror, had been thwarted. By 1961, six years after the introduction of the Salk vaccine, over 85% of American schoolchildren between ages 5 and 14 had received the required three doses. Cases of polio dropped from 58,000 in 1952 to less than a tenth of that number by 1960. By the end of the 1970s, the disease was virtually eradicated from the country. Today, polio is a distant memory for most Americans. That made the news all the more shocking in July 2022 when an unvaccinated man in Rockland County, New York, contracted paralytic polio, the first U.S. case recorded in nearly a decade.

In September, New York’s governor declared a state of emergency after wastewater testing revealed polio was silently spreading around the New York City metro area. Anti-vaccine activists, who had celebrated lowered childhood vaccination rates during the pandemic as “COVID’s silver lining,” accused New York officials of “trying to conjure up a polio outbreak.”

Outlandish on their own, these allegations ignored the fact that Rockland County, which has one of the lowest vaccination rates in New York State, had witnessed a measles epidemic just four years earlier. Now the county seemed primed for a polio outbreak to match it.

But there was a twist to the Rockland case that, on its face, seemed to bolster anti-vax skepticism. Testing revealed that most of the samples found around New York contained a type of polio that could only have come from a vaccine. Was this an alarming example of the cure being worse than the disease? As is often the case, the truth was more complicated.

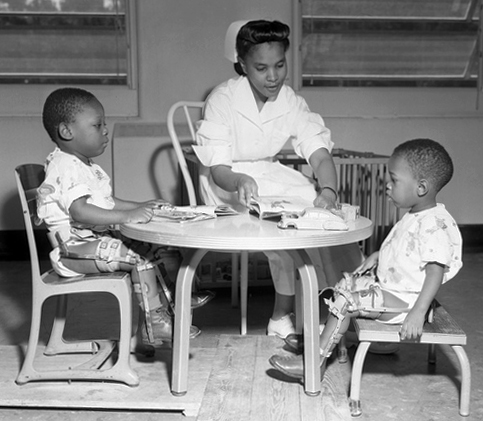

A polio patient in an iron lung, from a Max Cigarettes card series titled This Age of Power & Wonder, ca. 1935–1938.

To explain two apparently contradictory facts (that the polio victim in New York was unvaccinated but caught a disease derived from a vaccine), we need to look back at scientists’ efforts to tame polio in the first half of the 20th century. In tracing this history we find a very different public response to science’s fallibility and arrive at another set of entwined facts: scientific understanding will always be incomplete, and partly for that reason our relationship to it is always changing.

Evidence of polio goes back to the ancient Egyptians, but for most of history the disease cropped up as small, scattered outbreaks. Polio didn’t really take hold in the United States until the 1890s, when public health measures that curtailed cholera and other waterborne contagions had the perverse effect of changing polio from a typically mild intestinal ailment most often encountered during infancy to a more serious illness that attacked older children and even adults. Without early and routine exposure that built immunity, polio, when it struck, was much more lethal. The emergence of polio coincided with growing consciousness of the germ theory of disease and the development of vaccines, such as Pasteur’s famous rabies vaccine. Many people expected a vaccine for polio would arrive sooner or later.

Nearly 40 years passed before a pair of researchers developed the first candidate vaccines. Both attempts failed, and in doing so, revealed a near-complete lack of rules for human trials at the time.

Philadelphia immunologist John Kolmer employed a weakened live virus in his attempt at a vaccine. After testing it on himself and his lab assistant, in 1934 he tried it on 25 children aged 8 months to 15 years, including his own sons. He obtained, he said, written consent from those parents who did not actively volunteer their children. How did he explain the risks of his vaccine? We don’t know. Kolmer went on to distribute the vaccine to almost 600 physicians across the country but provided no instructions on how to administer it. He also failed to establish a control group, and thus had no way of monitoring the vaccine’s effectiveness. Possibly 10,000 children received the vaccine; ten contracted polio, and five died.

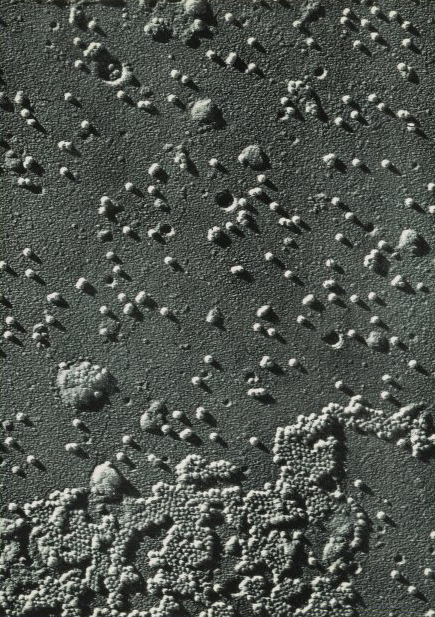

The first visualization of the polio virus through an electron microscope. Park, Davis, & Company, ca 1953.

That same year, New York virologist Maurice Brodie introduced a killed-virus vaccine, in which the virus was killed by formalin (a solution of formaldehyde) but retained its antibody-producing qualities. Unlike Kolmer, Brodie employed a control group that allowed him to test the vaccine’s effectiveness. Although only one of the 7,000 children and young adults who received the vaccine contracted polio, it remained unclear how effective the vaccine actually was because no one knew exactly how the virus operated in the body.

The consensus within the public health community was that Kolmer and Brodie jumped the gun in their attempts at a vaccine. The pair were denounced at a meeting of the American Public Health Association in 1935, with Kolmer receiving the harshest criticism. “All hell broke loose,” one participant remarked; another attendee called Kolmer a murderer. Development of a polio vaccine was put on hold.

When Jonas Salk of the University of Pittsburgh set out to develop a killed-virus vaccine in the late 1940s, this history was fresh. He was not the only one working on a vaccine. President Franklin Roosevelt, himself a polio victim, sponsored the National Foundation for Infantile Paralysis in 1937, and its March of Dimes mobilized millions of mothers to collect funds for research. Several investigators, many benefiting from these funds, competed to develop a vaccine, including teams at Johns Hopkins, the University of Cincinnati, and Lederle Laboratories in New York. As the postwar baby boom took off, anxious parents looked to science to ease their concerns about polio and other contagious diseases. The appearance of penicillin and other miracle drugs boosted public confidence that medical science would soon deliver a solution. But virologists were still puzzling over how best to attack the germ.

Billboard sponsored by the National Foundation for Infantile Paralysis (later March of Dimes), ca 1942.

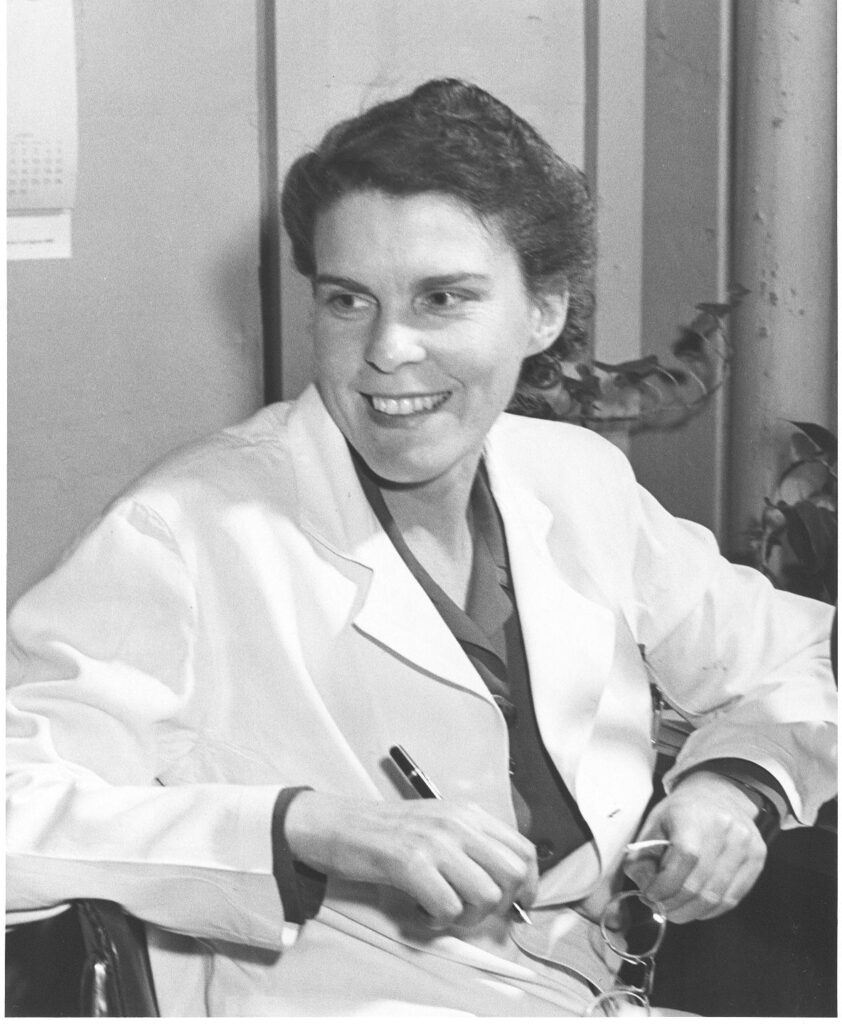

Most scientists were skeptical that killed-virus vaccines could lead to lasting immunity, citing Brodie’s failed attempt. Isabel Morgan at Johns Hopkins, however, believed that Brodie had simply used too much formalin to inactivate the virus in his vaccine, destroying its ability to provoke antibodies. Morgan developed a killed-virus vaccine employing formalin for one type of polio in the late 1940s that seemed to work in monkeys. Morgan was part of a team that established that there are three distinct serotypes of polio. An effective vaccine, therefore, had to work against all three.

Salk was the first to come up with a killed-virus vaccine successful against all three types. In 1952, he injected himself, his wife, his children, and several lab assistants with three doses of his new vaccine. He then turned first to a home for disabled polio victims to test antibody levels induced by the vaccine and then to a state school for the developmentally disabled for further trials. This was a common procedure at the time since the state, as guardian of the children, could consent to the procedures. While this seems shocking to us today, in the 1950s there were few legal protections for human experimental subjects.

Isabel Morgan, undated.

Salk presented his results early in 1953, and the National Foundation for Infantile Paralysis promoted the idea of a large-scale field trial to quell doubts about the vaccine among both scientists and the public. The foundation’s director of research answered criticisms that Salk was acting too soon by noting that all medical practice was a “calculated risk.”

Salk’s former mentor Thomas Francis, who a decade earlier had developed the first flu vaccine, was tapped to oversee the national trial. It was scheduled for the spring of 1954, ahead of the summer polio season. Francis, who had lived through the vaccine debacles of the 1930s, wanted to leave no loopholes. He favored a double-blind trial, in which half of the children would be injected with the vaccine and half with a colored-water placebo.

In terms of experimental models and statistical rigor, a double-blind test was by far the best option. To be successful, such a trial must also be randomized: the choice of who received the vaccine and who received a placebo would be as arbitrary as a coin flip. But there was a wrinkle. Because polio incidence varied greatly from place to place, children in the same grades and of the same age had to be matched against each other. The statistical task was daunting, and the logistics of injecting three shots into 650,000 children in 11 states were a nightmare.
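For readers who want to see what "as arbitrary as a coin flip, but matched by place and grade" can look like in practice, here is a minimal sketch of stratified randomization in Python. It is an illustration under stated assumptions, not a reconstruction of the 1954 procedure: the function name `assign_arms`, the school names, and the roster of 60 children are all hypothetical, and grouping by school and grade is only one plausible way to do the matching described above.

```python
import random
from collections import defaultdict


def assign_arms(children, seed=0):
    """Randomly split children into vaccine and placebo arms within each stratum.

    `children` is a list of (child_id, school, grade) tuples. A stratum is one
    (school, grade) pair, so every comparison is between children in the same
    place and grade. This grouping is an assumption made for illustration.
    """
    rng = random.Random(seed)  # seeded so the assignment can be reproduced
    strata = defaultdict(list)
    for child_id, school, grade in children:
        strata[(school, grade)].append(child_id)

    assignment = {}
    for key in sorted(strata):
        ids = strata[key]
        rng.shuffle(ids)  # the "coin flip": a random order within the stratum
        half = len(ids) // 2
        # First half gets the vaccine, the rest the placebo.
        # With an odd-sized stratum the extra child lands in the placebo arm.
        for position, child_id in enumerate(ids):
            assignment[child_id] = "vaccine" if position < half else "placebo"
    return assignment


# Hypothetical roster: two schools, grades 1 to 3, ten children per class.
children = [
    (f"{school}-{grade}-{n}", school, grade)
    for school in ("School A", "School B")
    for grade in (1, 2, 3)
    for n in range(10)
]
arms = assign_arms(children, seed=1954)
```

In this sketch each class of ten ends up with five children in each arm, so differences in local polio incidence affect both arms equally. In a double-blind design, the resulting table would be kept from both the families and the people giving the shots until the results were tallied.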

That’s not all. Alongside the double-blind test, researchers conducted an “observed control” test to further minimize variables. More than a million children across 33 states were given neither a vaccine nor a placebo, but were merely observed.

Young girl receiving polio immunization injection at a mobile clinic, ca 1960s.

While the randomized placebo model was essential to eliminate the effects of bias on the results and would help to placate Salk’s scientific critics, in Salk’s own words this “beautiful experiment . . . would make the humanitarian shudder.”

Under current guidelines for human subjects research, such an experiment would be conducted more slowly, possibly with fewer participants, and under more rigorous safeguards, if it were conducted at all. There were all kinds of hazards to consider: allergic reactions (which had happened with Brodie’s vaccine), quality control of the millions of doses that would be required, and unknown underlying conditions of the children, to name a few.

Nervous parents may have disliked not knowing whether their child had received the vaccine, but fear of years in an iron lung or permanent disability led an overwhelming number of them to sign their children up as “Polio Pioneers,” as they were promoted by the March of Dimes and in popular media.

Sign encouraging people to get the polio vaccine, ca 1955.

“Pioneer” invoked heroic voluntary action, and parents signed forms in which they “requested” that their children participate. Their “request” implied that to receive the vaccine was a privilege afforded only to a few, which it was in the eyes of many parents in 1954. More than 60% of parents assented to the double-blind trials, and almost 70% to the observed control trials. Extensive publicity and in-school presentations stressed the benefits of the trial, whether or not a parent’s child received the vaccine.

Although parents consented, the ethical framework around the field trials had not much advanced since the 1930s. The Nuremberg Code, a product of the Doctors’ Trial of Nazi doctors and officials following World War II, had set guidelines for human experimentation, but these were voluntary and had not been codified into American law. (This would not happen until the 1970s.)

But parents trusted science, and particularly trusted Salk, a family man and the public face of the campaign who had been on the cover of Time. In addition, American adults in the 1950s had grandparents who may have had typhoid or smallpox, or parents who had had diphtheria or who died of pneumonia or other bacterial infections. Those had all been largely conquered in the previous 75 years or so with the development of vaccines, antitoxins, and antibiotics.

The Salk family at the University of Michigan the day before the Salk vaccine was publicly declared safe and effective, April 1955.

As we all know, the Salk injected polio vaccine worked, showing 80% to 90% effectiveness. Thomas Francis announced the results of the field trials in April 1955, and the vaccine was licensed soon after. Bruce Fye, the physician and Polio Pioneer, comments, “The fact that [the field trial] worked out gives it a lot of cover” when assessing this history today.

What may not be remembered so well is how quickly Salk’s shots were displaced.

Within a few years, an oral, live-virus vaccine (OPV) developed by Albert Sabin reached the market; by 1965 it had mostly supplanted the Salk injected vaccine.

Sabin’s vaccine had a number of advantages. For one, the OPV confers protection via the intestines rather than the blood and is therefore more effective, particularly in areas of epidemics. That’s because polio is communicated orally, mainly through contaminated water. (Parents in the 1950s were right to be cautious about summer swimming holes as sources of the disease.)

Furthermore, the nature of the OPV aided in establishing herd immunity. Contact with an OPV-inoculated person can provoke immunity by means of a mild, often undetectable, infection even in someone who has not been vaccinated.

Finally, the OPV had the additional advantages of being cheaper to produce and easier to administer.

Detail from a Kenyan public health flyer, 2000.

Vaccination programs built around the OPV were wildly successful. As countries and then regions declared polio eliminated, it seemed reasonable in 1988 for the World Health Organization to set a goal of certifying the world as polio-free by 2005, following the procedures of mass vaccination that eliminated smallpox by 1980. However, achieving this goal proved more difficult than the WHO envisaged, and pockets of polio persist in Asia and Africa.

And while the OPV was critical to the 99% drop in polio cases worldwide since 1988, it has also brought its own problems.

In June 1999 a U.S. federal advisory panel recommended the OPV be abandoned in favor of the injected killed-virus vaccine. What caused this dramatic change in policy?

It has long been known that live-virus polio vaccines carry the risk of transmitting the disease they are meant to prevent, because vaccine virus particles are excreted in stool and then transmitted by contaminated water or dirty hands. Even if the virus does not back-mutate into a more virulent form, the weakened version can in some cases be sufficient to cause serious disease. Because the OPV contains live virus, a few children every year in the United States contracted polio from OPV. Although the risk is low—one case for every 2.4 million doses—it is real.

After natural, or wild, polio ceased to exist in the United States, elimination of vaccine-caused polio became a reasonable goal. The United States switched back to the Salk vaccine in 2000, but the OPV continues to be used elsewhere because of its ease of use and the broad protection it affords.

Of the three serotypes of polio that had been identified in the late 1940s, only wild type 1 remains in circulation. In 2015 wild polio type 2 was declared globally eradicated. To eliminate the risk of contracting type 2 from the OPV, the WHO directed countries around the world to employ oral vaccines that no longer contained type 2.

However, the turbulent state of the world, particularly in places where polio still exists, meant that this directive was not fully followed, and pockets of vaccine-related type 2 remain, particularly in places with poor sanitation and where the population is not fully vaccinated. As the WHO states, “the lower the population immunity, the longer this virus survives and the more genetic changes it undergoes,” becoming more infectious and more virulent.

A quality that made the OPV preferable, its ability to provide herd immunity by circulating among the unvaccinated, has come back to bite it. The Rockland County case was caused by a type 2 virus.

Global travel, which revived as the COVID-19 pandemic eased, can carry these new strains around the world, including to New York. In 2022 the United States became one of 30 countries identified as having vaccine-derived poliovirus in circulation—not from vaccines administered in the United States, but from elsewhere. Because many think polio has been vanquished, not enough people in places like Rockland County are protected with perfectly safe dead viruses. Now such communities are potential breeding grounds for a resurgence of live viruses.

We have come a long way from the fearful parents of 1954, whose trust in science was, it turns out, not misplaced. If the Rockland County victim had been vaccinated with Salk’s vaccine, he would not have contracted polio.

Nonetheless, the case shows us that science is complicated and messy and that combating infectious diseases has become a battle of information and ideology more than a race to innovation. Ironically, science’s success since the Polio Pioneers has been part of its undoing, changing our relationship with scientific authority and making many of us skeptical that monstrous pandemics can still lurk in the shadows.

Volunteering one’s child in 1954 for an experimental vaccine required a leap of faith that many today might find impossible. The fear those parents felt is largely absent from our lives. Opportunists have filled that void with the specter of misinformation and visions of a science that is secretive and self-serving. Even in the face of a global society paralyzed by COVID-19, this skepticism persisted. Must science truly fail us before we’ll finally appreciate it?

We have been given many long-proven tools to prevent and treat infectious diseases. The question is whether, like the Polio Pioneers and their parents, we have the confidence in science to use them.

Some of the material in this article is taken from the author’s book Experimenting with Humans and Animals: From Aristotle to CRISPR.